Over the last few months, I’ve been experimenting with ChatGPT. I even asked it to write this article about how journalists could use ChatGPT, and it spewed out 437 words on how it was a tool that could sift through vast amounts of information to uncover the truth. It even invented storylines about make-believe journalists who used it to report stories that never happened. In short, a fairy tale.

Artificial intelligence models like ChatGPT learn grammar, syntax, semantics, and even some level of reasoning and context in order to mimic human speech and communication. They suck up existing information on the internet and regurgitate it in response to the question you pose.

And we all know information on the internet is true, don’t we?

If there’s a data void, ChatGPT usually won’t admit that it doesn’t know the answer; instead, it will make one up. The exception is when you are extremely specific. When I asked it “what is the future of land use in Cooperstown, Wisconsin,” it did reply: “As of my last update, I don’t have access to information about the specific future developments or plans for land use in Cooperstown, WI.”

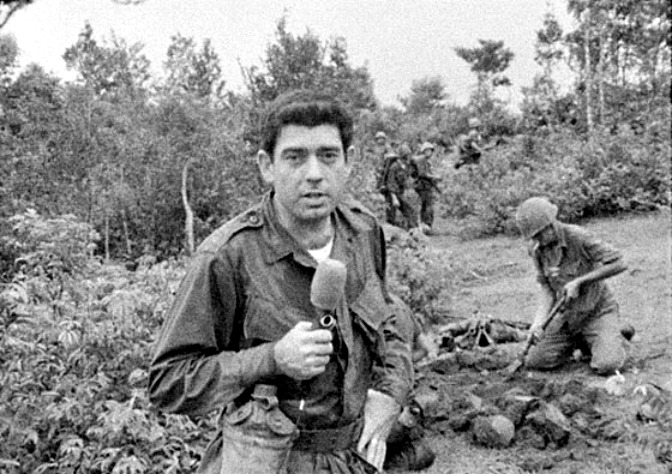

The reality is most journalists are in the business of reporting new information (hence the term “news”). If they’re local reporters, chances are the internet data dump does not have the latest info on the workings of a local school board or a common council or a court proceeding.

So will ChatGPT supplant local journalism? Unlikely. Information scooped off the internet cannot simply be trusted. We will still need reporters and editors to vet information, double-check sources, and present the human impact of the news.

Just ask ChatGPT—it’ll tell you that AI models “may not be able to fully replace local news.” At least that much is true.
